BF16

Accuracy

Baseline recipe using bfloat16 GEMMs for maximum numerical accuracy. No quantization.

- Best when stability matters most

- Great for validation baselines

The open-source AgentOps platform. Train and fine-tune models with a native C++/CUDA engine — then build, deploy, and operate autonomous AI agents on your own infrastructure.

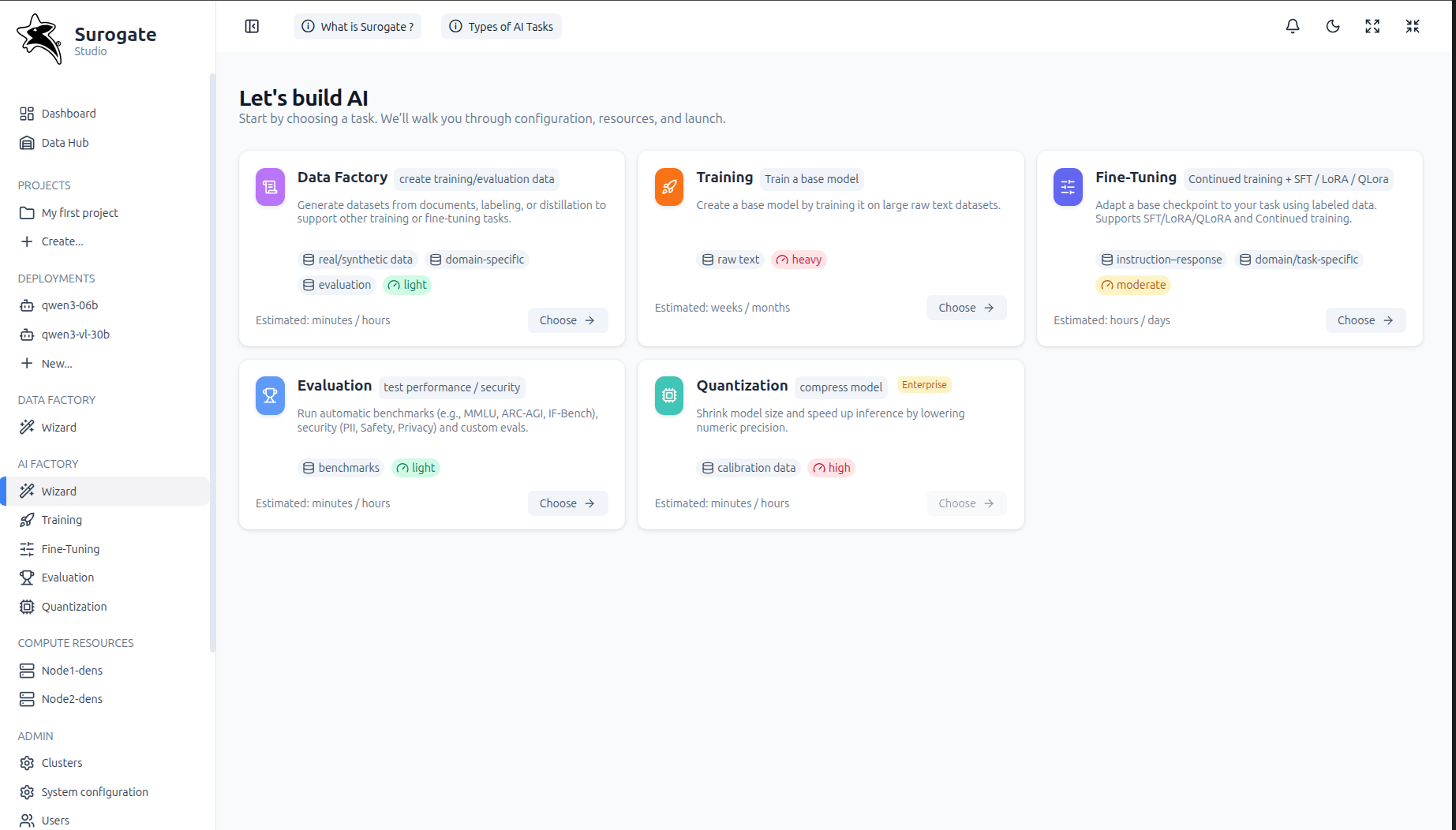

What Surogate does

Near hardware-limit training

A native C++/CUDA engine that pushes your NVIDIA GPUs to their practical limits — from single-GPU rigs to multi-node clusters.

Autonomous agent runtime

Compose agents from skills, tools, MCP servers, and sub-agents. Agents reason, plan, and execute complex multi-step workflows.

Closed improvement loop

Production traces become training data. Fine-tune Specialized Language Models from agent trajectories. Agents get better automatically.

Full agent observability

Complete execution traces for every agent run — every LLM call, tool invocation, sub-agent step, and failure. Visual trace viewer included.

Self-hosted, full control

Runs on Kubernetes. Your infrastructure, your models, your data. On-premise or cloud. Air-gap capable for regulated environments.

Surogate is the open-source AgentOps platform by Invergent. It combines a high-performance LLM training engine with a full autonomous agent runtime — so you can train the models that power your agents, and operate those agents at production scale, all in one self-hosted system.

The training core is built in native C++/CUDA, engineered for near hardware-limit throughput on modern NVIDIA GPUs. The agent layer runs on Kubernetes, with full execution tracing, skill lifecycle management, and a continuous improvement loop from production data.

Choose a precision recipe that matches your hardware and goals, from maximum numerical stability to maximum speed-of-light (SOL) throughput.

Baseline recipe using bfloat16 GEMMs for maximum numerical accuracy. No quantization.

Native FP8 training (E4M3 for weights & activations, E5M2 for gradients) with delayed scaling for stability.
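To make "delayed scaling" concrete, here is a minimal pure-Python sketch of the general technique, not Surogate's actual kernel code. The class name `DelayedScaler`, the history length, and the margin are assumptions chosen for illustration; the only fixed facts are the largest finite values of the two FP8 formats (448 for E4M3, 57344 for E5M2). Rounding to the FP8 grid is omitted, so only the scale selection and clamping are shown.

```python
from collections import deque

# Largest finite magnitudes of the two FP8 formats.
FP8_MAX = {"e4m3": 448.0, "e5m2": 57344.0}


class DelayedScaler:
    """Hypothetical helper: derives the scale from amax values of earlier steps."""

    def __init__(self, fmt: str, history_len: int = 16, margin: float = 1.0):
        self.fp8_max = FP8_MAX[fmt]
        self.history = deque(maxlen=history_len)  # assumed window size
        self.margin = margin                      # assumed safety factor

    def scale(self) -> float:
        # Delayed: only amax values recorded on previous steps are used.
        if not self.history:
            return 1.0
        amax = max(self.history)
        if amax == 0.0:
            return 1.0
        return self.fp8_max / (amax * self.margin)

    def quantize_input(self, values):
        """Scale and clamp to the FP8 range, then record this step's amax."""
        s = self.scale()
        scaled = [max(-self.fp8_max, min(self.fp8_max, v * s)) for v in values]
        self.history.append(max(abs(v) for v in values))
        return scaled, s


scaler = DelayedScaler("e4m3")
first, s1 = scaler.quantize_input([1.0, -2.0])  # no history yet, scale is 1.0
second, s2 = scaler.quantize_input([4.0])       # scale from previous amax of 2.0
```

Because the scale lags by one step, a tensor that suddenly grows beyond the recorded history gets clamped (as `second` is here) until the history catches up. That is the trade-off delayed scaling makes to avoid an extra pass over the current tensor.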

CUTLASS FP4 (E2M1) training with block scaling, stochastic rounding, and Hadamard transforms for stability.
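The combination of block scaling and stochastic rounding can be sketched in a few lines of plain Python. This is an illustrative model under stated assumptions, not the CUTLASS implementation: the function names are hypothetical, the block is whatever list is passed in, the scale is kept as an ordinary float, and the Hadamard transform step is left out entirely. The E2M1 grid itself (magnitudes 0, 0.5, 1, 1.5, 2, 3, 4, 6) is fixed by the format.

```python
import random

# Non-negative magnitudes representable in E2M1; the sign is a separate bit.
E2M1_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]


def stochastic_round(x: float, rng: random.Random) -> float:
    """Round to a neighbouring grid point so the result is unbiased on average."""
    sign = -1.0 if x < 0 else 1.0
    mag = min(abs(x), E2M1_GRID[-1])  # clamp to the largest magnitude
    for lo, hi in zip(E2M1_GRID, E2M1_GRID[1:]):
        if lo <= mag <= hi:
            p_up = (mag - lo) / (hi - lo)  # closer to hi means more likely hi
            return sign * (hi if rng.random() < p_up else lo)
    return sign * mag  # not reached: mag always lies inside the grid


def quantize_block(block, rng):
    """Scale one block so its largest magnitude maps to 6.0, then round."""
    amax = max(abs(v) for v in block)
    scale = amax / E2M1_GRID[-1] if amax > 0 else 1.0
    codes = [stochastic_round(v / scale, rng) for v in block]
    return codes, scale


def dequantize_block(codes, scale):
    return [c * scale for c in codes]


rng = random.Random(0)
codes, scale = quantize_block([0.0, 3.0, -6.0, 12.0], rng)
restored = dequantize_block(codes, scale)
```

A per-block scale keeps one outlier from flattening every other value in the tensor to zero. Stochastic rounding keeps the expected value of each element unchanged, which matters when many small gradient contributions are accumulated.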

QLoRA

BitsAndBytes, FP8 and NVFP4 dynamic quantization to help maximize SOL on Ampere/Hopper/Blackwell hardware.

The full-stack AgentOps platform — built on the open-source core.

Autonomous Agent Runtime

Compose agents from skills, tools, MCP servers, and custom LLMs. Agents reason, coordinate sub-agents, and execute complex workflows — deployed as containerized Kubernetes applications.

Full Agent Observability

Every agent run generates a complete execution trace — LLM calls, tool invocations, sub-agent steps, memory operations, errors. Visual trace viewer, session replay, anomaly alerts, performance dashboards.

Continuous Improvement Loop

Collect production traces → convert to training datasets → fine-tune Specialized Language Models (SLMs) from agent trajectories → evaluate → promote to production. Agents improve automatically over time.

High-Performance Training & Serving

Full fine-tuning, LoRA/QLoRA, RL alignment (GRPO, DPO, PPO), multi-GPU/multi-node. GPU-accelerated inference with vLLM, KV-cache offloading, tensor parallelism, LoRA adapter stacking.

Data Hub — Git-style Artifact Registry

Central versioned registry for models, datasets, agent definitions, skills, and tools. Git-style branches, commits, tags, PRs, diffs. Import/export from HuggingFace and ModelScope. Single source of truth across the entire platform.

Below is a minimal flow: install the package, create a small YAML config, and start a supervised fine‑tune.

Run the following command:

curl -sSL https://surogate.ai/install.sh | bash

This installs the CLI so you can run training with simple commands.

Note: requires Ubuntu 24.04 (x86-64) with CUDA 12.8, 12.9, or 13.0.

Start the SFT job using your config:

surogate sft examples/sft/qwen3-lora-qbnb.yaml

Output

Checkpoints, logs, and artifacts are written under output_dir.

    model: Qwen/Qwen3-0.6B
    output_dir: ./output

    # training
    per_device_train_batch_size: 2
    gradient_accumulation_steps: 4
    sequence_len: 2048
    learning_rate: 2e-4

    # LoRA / QLoRA
    lora: true
    lora_rank: 16
    # qlora_fp8: true  # optional, hardware-dependent
    # qlora_fp4: true  # Blackwell+

    datasets:
      - path: "mlabonne/FineTome-100k"
        type: auto
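As a quick sanity check on the training values in this config, the arithmetic below estimates how many sequences and tokens contribute to one optimizer step. It is a sketch under assumptions: the helper name is hypothetical, `sequence_len` is treated as an upper bound on tokens per sequence, and multiple GPUs are assumed to run plain data parallelism.

```python
def tokens_per_optimizer_step(per_device_batch: int,
                              grad_accum: int,
                              sequence_len: int,
                              num_gpus: int = 1):
    """Return (sequences, max_tokens) consumed by a single optimizer step."""
    # Each GPU processes per_device_batch sequences per micro-step and
    # accumulates gradients over grad_accum micro-steps before updating.
    sequences = per_device_batch * grad_accum * num_gpus
    return sequences, sequences * sequence_len


# Values taken from the config: batch size 2, accumulation 4, length 2048.
sequences, max_tokens = tokens_per_optimizer_step(2, 4, 2048)
print(sequences, max_tokens)  # 8 16384
```

Raising `gradient_accumulation_steps` increases the effective batch without increasing peak activation memory. Raising `per_device_train_batch_size` or `sequence_len` increases both.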

Swap the model

Use any supported base model you want to fine‑tune.

Tune sequence length

Set sequence_len to fit your GPU memory and your target task.

Enable QLoRA

Flip qlora_fp8 or qlora_fp4 when your hardware supports it.

Runs on Linux with an NVIDIA GPU, recent drivers, CUDA (12/13), NCCL, and cuDNN. GPU support spans architectures from sm80 to sm121, including the Hopper and Blackwell generations.
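One way to check a machine against the sm80 to sm121 range is to read each GPU's compute capability and compare it numerically. The sketch below is an assumption-laden illustration rather than an official compatibility check. The helper names are hypothetical, it treats every value inside the range as supported (which the text above does not strictly promise), and it relies on `nvidia-smi` exposing the `compute_cap` query field, which older drivers may lack.

```python
import subprocess

MIN_SM, MAX_SM = 80, 121  # range quoted in the requirements above


def parse_compute_cap(text: str) -> int:
    """Convert a string such as '9.0' or '12.1' to an sm number (90, 121)."""
    major, minor = text.strip().split(".")
    return int(major) * 10 + int(minor)


def in_supported_range(sm: int) -> bool:
    # Assumption: anything between the two endpoints counts as supported.
    return MIN_SM <= sm <= MAX_SM


def local_gpu_caps():
    """Query the sm number of every local GPU via nvidia-smi."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=compute_cap", "--format=csv,noheader"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [parse_compute_cap(line) for line in out.splitlines() if line.strip()]


# Example usage on a machine with an NVIDIA driver installed:
#   for sm in local_gpu_caps():
#       print(f"sm{sm}", "ok" if in_supported_range(sm) else "outside range")
```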

Want deeper examples?

Browse docs and curated examples for recipes and model‑specific settings.